We had an interesting discussion in my NLP class this week regarding the growing use of AI chatbots as therapists. (My regular news magazine The Week had a “briefing” article on this recently.) As you may be aware, Americans are increasingly turning to AI chatbots for counseling and comfort in place of human therapists. This includes both apps dedicated to the purpose (like Wysa and Youper) and plain old general-purpose AIs like ChatGPT. The trend is driven in part by health-care costs: professional therapy is simply unaffordable for some people, and this group may grow given the unpredictability of the U.S. health care system.

I framed this question to the class in terms of the costs vs. benefits of the technology, and asked discussion groups to brainstorm and report pros and cons. Then I asked for a show of hands, point-blank, to the effect of: “generally speaking, do you think this is a good technology, or should it be avoided?” I wasn’t surprised when every hand went up saying it should be avoided.

Are you surprised that I wasn’t surprised? After all, it’s natural to assume that young people would be the first to embrace alternate technologies, and to voice the fewest objections to the traditional status quo. But I’ve discovered that of all the people I talk to about AI, the ones most concerned about its proliferation are precisely these young people. I call them “young Luddites” in jest, but many of their arguments are quite principled. The most common refrain is “it’s dangerous to outsource function X to a computer, and you simply can’t expect it to have the same competence as a human expert.” I’m inclined to agree in many cases (medical diagnoses, judicial sentencing of convicted criminals, high-stakes policy-making), but it’s interesting that so many objections arise from the Gen-Z folks.

It was the students’ turn to be surprised, I think, when I pushed back on their pushback. I actually think that used properly, therapy could be a terrific application for AI, especially in light of the prohibitive mental health costs many are facing. To argue this, I asked students to compare two scenarios:

1. A close friend of yours says they’ve been told they’re tangled up in codependent relationships. They checked out therapy options, which were inconvenient and costly, and then on a whim went to their local bookstore to browse books about codependency. “Wow, this book I found was amazing!” the friend says. “I learned so much about myself. I’m already making meaningful changes, and I’ve never felt so in control of my life!”

2. Same as 1, except substitute “AI chatbot” for “book at the bookstore.”

The students’ visceral reaction against scenario 2 largely evaporated when they switched mentally to scenario 1. It’s interesting to consider why.

The purpose of going to therapy is presumably to confront your current thoughts and behaviors, unpack the reasons behind them, and make positive changes in your life. Now most of that is processing information (from your therapist) and interactively discovering new possibilities. So it doesn’t surprise us in the least that some people might successfully learn these things from a book addressing their area of struggle. (Why else would books like that be written or sold?) But the fluid, dynamic nature of an AI conversation — which actively probes the user with questions and adapts to their individual responses — could well be even better, no? If pre-packaged information by a knowledgeable author can help us mentally, then how much more so a custom-tailored delivery by something that has all psychology knowledge ever published at its fingertips?

Disentangling the class’s objections to this unveiled some interesting things. Their first counterarguments were of the form: “but books are reliable, and ChatGPT is not.” I believe this stems partly from the misconception (common to many young adults, it seems) that “if it’s in print, it must have been fact-checked and sanctioned and authorized by important people.” This assumption is usually pretty easily debunked.

It also stems from the fact that ChatGPT’s bigger mistakes tend to go viral and get cemented in people’s minds as prototypical. People who don’t actually use it much seem to assume it’s wrong about every other sentence. Is this really true? I personally use ChatGPT every single day, on a variety of topics, and on balance I take everything it says with pretty much the same-sized grain of salt I would take anything an average human says. This isn’t to say ChatGPT is always right, or even reliably right…but comparing to human fidelity is honestly a pretty low bar.

Evidence is mixed on the question of human vs. ‘bot correctness (see, e.g., Herbold et al. 2023, Mahmoudi Ghehsareh et al. 2025, Krichevsky et al. 2025), and of course it’s a moving target given the pace of AI advances. But it seems to me a stretch to think that the statement “humans simply give more reliable answers than AIs do” will hold up for much longer, if it’s even true now.

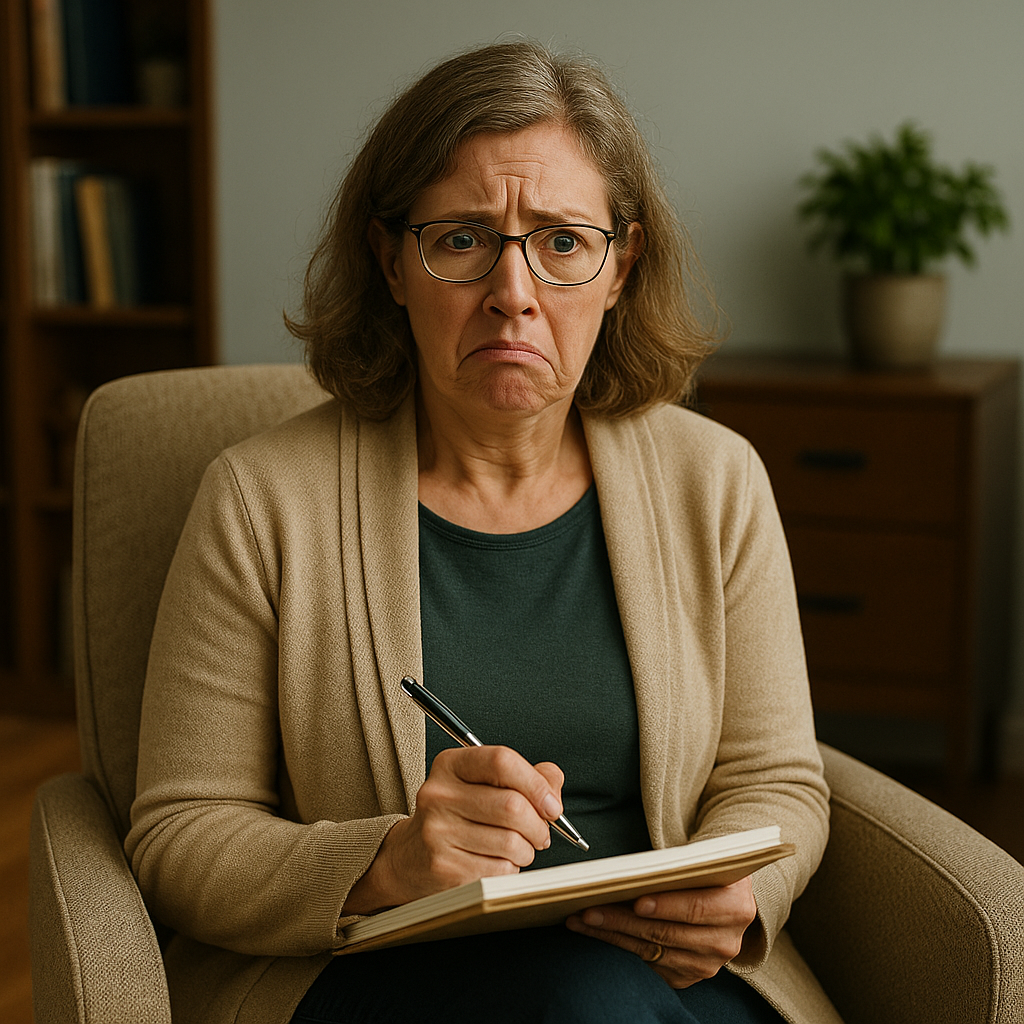

I’m sure that the average professionally-trained therapist today is going to give superior advice straight-up against a ‘bot. But there are some pretty sucky therapists out there too. And human therapists, being human, suffer from the same biases and ruts we all do. Ask yourself: at your own job, what percent of the time do you give the best possible answer? Or even a pretty good answer? This will vary widely depending on what you do, but in many fields I don’t think an average practitioner would come out shining in a competition with a well-trained, RAG-equipped AI. Just a hunch.

The students’ other (and to me more convincing) point had to do with the way in which humans interact with books vs AI. Students argued, in effect, that when using AI a person is much more likely to “outsource the entire decision-making process” to it than they are when reading. In other words, since the AI is helping guide the conversation, presenting concrete alternatives for your specific situation, etc., it’s allegedly easier to just surrender one’s whole will to it. “Yeah, okay, you’re right, I believe you. Now just tell me what to do and I’ll do it.” Whereas when reading, this story goes, it’s more likely that the person will gather information thoughtfully and critically evaluate it.

There’s probably research in this area I’m not aware of. As of now, I’m not sure to what degree this alleged difference is real. Do people really critically evaluate material from books more deeply than that given by a ‘bot? I’m not sure. And I’m even less sure that an AI therapist would be more dangerous than a real human counselor would. After all, if your credentialed and confident professional is telling you what you should do, aren’t you just as susceptible to accepting the advice uncritically?

One last thought for today, which also surprised the students when I stated it: to me, the question of AI therapists is in a completely different realm than the question of AI companions. By “AI companionship” I mean pursuing a friendship, or even a romantic relationship, with a ‘bot. (For examples, see apps like Replika, or Talkie AI.) If this idea seems far-fetched to you, consider that a recent study claims to have found that 72% of U.S. teenagers have tried AI companions, with 52% self-identifying as regular users. This, it would appear, is not an area where young Luddites abound.

The reason these two applications seem completely different to me — and why I’m pro-AI-as-therapist but anti-AI-as-companion — is that the very purpose of a relationship is to have…well, a relationship. If you’re faking a relationship by simply mimicking the stimuli that would occur in a real relationship, you’re ultimately deceiving yourself about what is even happening. Whereas the purpose of therapy is not to have a relationship with one’s therapist, but (as I described above) to learn and apply ideas.

I’m not claiming the user’s feelings about their companion aren’t real feelings, only that what those feelings point to is an illusion. At the end of the day, you have to ask, “am I in the relationship because this person makes me feel a certain way?” If the answer is yes, then I suppose it might make brutal sense to replace the actual person with something that artificially stimulates and produces those feelings. One student approached me after class, in fact, and said that in his experience, most romantic relationships between college-age people are about that very thing; one ultimately doesn’t care about one’s partner, only the feelings that partner produces.

Without sounding too judgy, that doesn’t really sound like a “relationship” at all to me. It reminds me of someone who told me once that he actually didn’t know — or much care — whether a God actually existed or not; he simply derived comfort from praying and pretending that one did. It’s like saying it doesn’t matter whether anyone is on the other end of the phone line or not, only that your ear hears certain sounds. Capitulating to this idea seems to me the ultimate expression of selfishness: “I’m literally the only one who matters here, and the other person (if there even is one) is simply my tool.”

Interested in others’ thoughts.

— S

Gao, Y. N., & Olfson, M. (2025). High Out-of-Pocket Cost Burden of Mental Health Care for Adult Outpatients in the United States. Psychiatric Services (Washington, D.C.), 76(2), 200–203.

Herbold, S., Hautli-Janisz, A., Heuer, U., Kikteva, Z., & Trautsch, A. (2023). A large-scale comparison of human-written versus ChatGPT-generated essays. Scientific Reports, 13(1), 18617.

Krichevsky, B., Engeli, S., Bode-Böger, S. M., Koop, F., Schulze Westhoff, M., Schröder, S., Schumacher, C., Pape, T., Stichtenoth, D. O., & Heck, J. (n.d.). Human vs. artificial intelligence: Physicians outperform ChatGPT in real-world pharmacotherapy counselling. British Journal of Clinical Pharmacology, e70321.

Mahmoudi Ghehsareh, M., Asri, N., Azizmohammad Looha, M., Sadeghi, A., Ciacci, C., & Rostami-Nejad, M. (2025). Expert evaluation of ChatGPT accuracy and reliability for basic celiac disease frequently asked questions. Scientific Reports, 15(1), 29871.

Siddals, S., Torous, J., & Coxon, A. (2024). “It happened to be the perfect thing”: Experiences of generative AI chatbots for mental health. NPJ Mental Health Research, 3, 48.

The Behavioral Health Care Affordability Problem. (2022, May 26). Center for American Progress. https://www.americanprogress.org/article/the-behavioral-health-care-affordability-problem/
